Search engine optimization (SEO) has been a key focus for marketers over the past decade. The race to rank first among a plethora of competing brands has every brand working tirelessly on its website content and technical setup, both to satisfy Google’s algorithm and to create a better experience for users. This blog post provides an SEO checklist to help you carry out a technical SEO audit.

With 2020 bringing new expectations and forecasts for search engine marketers, most of us are wrapping up our new year audits of the websites we handle for our companies or clients. I’ve been researching new SEO findings for the new year too, and in my observation, there are a few technical SEO audit essentials you shouldn’t miss in 2020. But before we look at the list, let’s talk a bit more about SEO audits and their impact on your SERP (search engine results page) strategies.

What’s an SEO Audit?

An SEO audit is an analysis of how well your website is optimized for search engines. Audits usually include a full review of your website’s technical setup and content. Technical checks may cover responsiveness, page speed, URLs, interlinking and more, while content checks include reviewing keywords, heading tags, meta titles and descriptions, and more.

Why are regular Audits important?

SEO isn’t a one-time task. Just as trends and campaigns change every season for each brand, search results keep changing based on new websites entering the market, updates to Google’s algorithm, the new pages you add to your website, and your competitors’ marketing strategies. Because your market is always changing, you need to keep your website updated, which is why regular audits are important. They help you identify the changes you need to make on your website and keep your pages technically sound as search engines recrawl them.

But it’s important to know which essential features you should audit every time you review your website. Take a look at the SEO checklist below:

SEO Audit Checklist

1. Website Architecture

Website architecture refers to your website’s structure and overall build. Analyzing how your website is constructed shows whether there are any gaps in the architecture and how they can be fixed. Here are a few features you should review:

[su_accordion class=””]

[su_spoiler title=”Site Depth Check” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]Site depth is the number of clicks it takes to reach a page from the homepage. Deeper pages tend to rank poorly because search engine spiders crawl them less often and they receive less internal link equity. Review deep pages from time to time, and move important or valuable ones closer to the homepage.[/su_spoiler]

[su_spoiler title=”Top-Level Navigation (TLN) Analysis” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]Top-level navigation (TLN) is a website’s main menu and the navigation paths it creates. A good rule of thumb for TLN is that all your important pages should be reachable from the main menu, so users can visit them in as few clicks as possible. The TLN should also be SEO-friendly: use clear, descriptive menu labels and plain HTML links that search engines can crawl.

[/su_spoiler]

[su_spoiler title=”Footer Check” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]When it isn’t practical to link every page in your sitemap from your TLN, you can link the remaining pages from your footer.

[/su_spoiler]

[su_spoiler title=”Breadcrumb Check” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]Breadcrumb trails are small navigation menus, usually near the top of a page, that show the path from the homepage to the page the user is currently on. Google recommends breadcrumbs in its SEO guidance. They help users understand how a page relates to the rest of the site, make navigation easier and can even reduce bounce rate.

Marking up breadcrumbs with structured data helps search engine bots understand your site hierarchy during crawling and indexing, and Google may show the breadcrumb trail in search results in place of the page’s URL.

[/su_spoiler]

[/su_accordion]
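
To measure site depth during an audit, one approach is a breadth-first search over your internal-link graph, starting from the homepage. The sketch below assumes you already have that graph as a dictionary (for example, built from a crawler export); the function name `click_depths`, the sample URLs and the three-click threshold are illustrative assumptions rather than an established standard.

```python
from collections import deque

DEPTH_LIMIT = 3  # hypothetical threshold for "too deep"; tune it for your site


def click_depths(links: dict[str, list[str]], homepage: str) -> dict[str, int]:
    """Return the fewest clicks needed to reach each page from the homepage.

    `links` maps each page to the internal pages it links to. Breadth-first
    search visits pages in order of distance, so the first time a page is
    reached is along a shortest click path.
    """
    depths = {homepage: 0}
    queue = deque([homepage])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


if __name__ == "__main__":
    # Hypothetical internal-link graph for a small site.
    site = {
        "/": ["/services", "/blog"],
        "/services": ["/services/seo"],
        "/blog": ["/blog/page-2"],
        "/blog/page-2": ["/blog/page-3"],
        "/blog/page-3": ["/blog/old-post"],
    }
    known_pages = set(site) | {"/blog/old-post", "/services/seo", "/landing-page"}

    depths = click_depths(site, "/")
    for url, depth in sorted(depths.items(), key=lambda item: item[1]):
        note = "  <- deeper than limit" if depth > DEPTH_LIMIT else ""
        print(f"{depth}  {url}{note}")

    # Pages that exist but cannot be reached by following internal links.
    print("Orphan pages:", sorted(known_pages - set(depths)))
```

Pages that never appear in the result are orphans: no internal link leads to them, which is also worth fixing through the TLN or footer.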

2. Findability Analysis

Findability analysis helps you determine how easy it is for both search engines and users to find information on your website. This may be the most important item on your SEO audit checklist, as it shows you how to place information so that it is easier for search engines to rank and for users to digest.

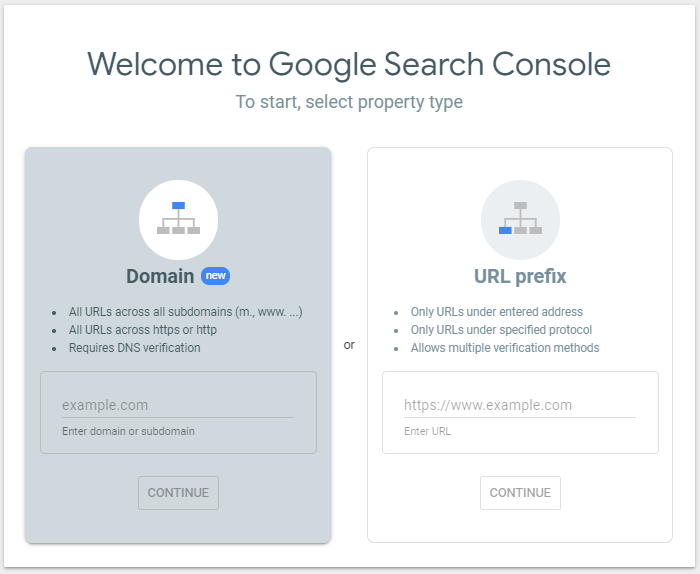

One of the most important features to check is indexation status: the number of pages on your website that search engines have indexed. A low indexation rate may be caused by issues such as pages with no internal links, weak site authority (often estimated with third-party metrics such as Moz’s Domain Authority, or DA, score) and others. Resolving these issues can improve both performance and ranking. You can check your website’s indexation status in Google Search Console.
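
Indexation rate is simple arithmetic: the share of the URLs you want indexed that search engines have actually indexed. Here is a minimal sketch, assuming you have exported both lists yourself (for example, the URLs in your XML sitemap and the indexed URLs from a Search Console report); `indexation_report` is a hypothetical helper, not part of any Google tool.

```python
def indexation_report(submitted: set[str], indexed: set[str]) -> tuple[float, set[str]]:
    """Return (indexation rate, URLs not indexed) for a set of submitted URLs."""
    if not submitted:
        return 0.0, set()
    not_indexed = submitted - indexed
    rate = (len(submitted) - len(not_indexed)) / len(submitted)
    return rate, not_indexed


if __name__ == "__main__":
    # Hypothetical exports: URLs listed in the sitemap vs. URLs reported as indexed.
    sitemap_urls = {"/", "/services", "/blog", "/blog/seo-audit-checklist"}
    indexed_urls = {"/", "/services", "/blog"}
    rate, missing = indexation_report(sitemap_urls, indexed_urls)
    print(f"Indexation rate: {rate:.0%}")  # 3 of 4 submitted URLs -> 75%
    print("Not indexed:", sorted(missing))
```

The list of URLs that are not indexed is usually more useful than the rate itself, because it tells you exactly which pages to investigate.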

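Other features to consider include XML sitemaps, which help search engines discover and index the written and visual content on your website; pagination markup (rel="next" and rel="prev" link tags), which signals how a series of paginated pages fit together and was meant to reduce duplicate content and indexation issues (Google said in 2019 that it no longer uses these tags for indexing, though other search engines may still read them); custom 404 pages, which guide users to other pages when the page they want has been removed or moved; and similar features that show how well your website’s content and pages are set up technically.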
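
As an illustration of the XML sitemap mentioned above, the sketch below builds a minimal sitemap following the sitemaps.org protocol using only Python’s standard library. The URLs and dates are hypothetical and `build_sitemap` is an illustrative name; most content management systems and SEO plugins can generate this file for you.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, str]]) -> str:
    """Build sitemap XML from (absolute URL, last-modified date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc  # characters such as & are escaped automatically
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date format, e.g. 2020-01-15
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    pages = [
        ("https://www.example.com/", "2020-01-15"),
        ("https://www.example.com/blog/seo-audit-checklist", "2020-01-10"),
    ]
    print(build_sitemap(pages))
```

Once generated, the file is typically served at the site root and submitted in Google Search Console.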

3. URL Analysis

URL analysis checks whether your website’s URLs are well optimized. If you run a large website where new pages and content are added constantly, frequent URL checks and clear URL guidelines can help prevent mistakes and major errors.

One of the major factors to analyze is URL delimiters, i.e. the use of hyphens and underscores in links. Google recommends hyphens rather than underscores: hyphens are read as word separators, while underscores can cause the words on either side to be read as one string (so seo_audit may be treated as the single word seoaudit). Use hyphens consistently, and avoid other symbols in URLs.

Other factors to consider include URL relevancy and friendliness in terms of memorability, structure and content.
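
The delimiter and friendliness checks above are easy to automate. This sketch flags underscores, uppercase letters, query strings and overly long URLs, and turns a page title into a hyphenated slug. The 75-character limit is an arbitrary assumption for illustration, not a Google rule, and `audit_url` and `slugify` are hypothetical helper names.

```python
import re
from urllib.parse import urlparse

MAX_URL_LENGTH = 75  # arbitrary illustrative limit, not a search-engine rule


def audit_url(url: str, max_length: int = MAX_URL_LENGTH) -> list[str]:
    """Return readability problems found in a single URL."""
    parts = urlparse(url)
    issues = []
    if "_" in parts.path:
        issues.append("uses underscores; prefer hyphens between words")
    if parts.path != parts.path.lower():
        issues.append("contains uppercase letters")
    if parts.query:
        issues.append("has query parameters; prefer a descriptive static path")
    if len(url) > max_length:
        issues.append(f"longer than {max_length} characters")
    return issues


def slugify(title: str) -> str:
    """Convert a page title into a lowercase, hyphen-separated URL slug.

    Any run of characters other than a-z and 0-9 (including non-ASCII
    letters in this simple version) becomes a single hyphen.
    """
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    return slug.strip("-")


if __name__ == "__main__":
    for url in ["https://www.example.com/seo-audit-checklist",
                "https://www.example.com/SEO_Audit?id=3"]:
        print(url, audit_url(url) or "OK")
    print(slugify("Technical SEO Audit: 2020 Checklist!"))
```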

4. On-Page Analysis

One of the most important parts of a website analysis is reviewing your on-page SEO. Because several team members may be involved in updating on-page content, it is crucial that everyone understands the major and minor factors to consider in this area.

[su_accordion class=””]

[su_spoiler title=”HTML tags” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]

Your on-page SEO audit should cover important HTML tags and attributes such as hreflang annotations, which tell Google which language (and, optionally, which region) each version of a page targets; structured data markup, a snippet of code (usually JSON-LD) placed in the page’s <head> or <body> that tells search engines what your page is about; the <title> tag, which sets the page title shown in browser tabs and search results; and heading tags (<h1> to <h6>), which structure the content on the page.

[/su_spoiler]

[su_spoiler title=”Meta Analysis” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]

Each page on your website should have a meta description: a short summary of what the page is about, which search engines may show below the title in search results. Keep meta descriptions to roughly 156 characters or fewer, since longer ones are often truncated, and make each one describe its page clearly without duplicating another page’s description.

[/su_spoiler]

[su_spoiler title=”Key Content Placement” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]Key content is the main content for which your page has been created. Best practice is to place your key content in the top section of the page, and if it’s divided into headings or tabs, each heading should indicate what content sits where. This helps users and bots find content on your page easily.[/su_spoiler]

[su_spoiler title=”Quality of Content” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]Content quality has a large influence on search performance. The more useful and well-written your content, the more likely users are to visit the page for information, and the better its chances of ranking on search engines.[/su_spoiler]

[su_spoiler title=”Image Optimization” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]Images on webpages can make or break your search strategy. Before you upload images to your page, optimize their file size so they don’t slow down page loading. Each image should also have an alt attribute (alt text) that describes what the image shows. If the image doesn’t load, the alt text tells users what it is about, and it also helps images rank in search engine results.[/su_spoiler]

[/su_accordion]
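
Several of the on-page checks above (a title, a meta description within the length limit, and alt text on every image) can be run over a page’s HTML with Python’s built-in `html.parser`. This is a minimal sketch: the class and function names are hypothetical, it inspects one HTML string at a time, and the 156-character limit simply mirrors the figure used in this checklist.

```python
from html.parser import HTMLParser

META_DESCRIPTION_LIMIT = 156  # mirrors the limit suggested in this checklist


class OnPageAuditor(HTMLParser):
    """Collect the title, meta description and image alt text from one page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = None  # stays None if the tag is missing
        self.images_missing_alt = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)  # attribute values can be None, e.g. <img alt>
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.meta_description = attrs.get("content") or ""
        elif tag == "img" and not (attrs.get("alt") or "").strip():
            self.images_missing_alt.append(attrs.get("src") or "(no src)")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit_page(html):
    """Return a list of on-page issues found in an HTML document."""
    auditor = OnPageAuditor()
    auditor.feed(html)
    auditor.close()
    issues = []
    if not auditor.title.strip():
        issues.append("missing <title>")
    if auditor.meta_description is None:
        issues.append("missing meta description")
    elif len(auditor.meta_description) > META_DESCRIPTION_LIMIT:
        issues.append("meta description too long")
    for src in auditor.images_missing_alt:
        issues.append(f"image without alt text: {src}")
    return issues


if __name__ == "__main__":
    page = """<html><head><title>SEO Audit Checklist</title></head>
    <body><img src="chart.png"><img src="logo.png" alt="Company logo"></body></html>"""
    for issue in audit_page(page):
        print(issue)
```

Note that the sketch also flags empty `alt=""` attributes, which are acceptable for purely decorative images, so review its output rather than fixing every item blindly.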

5. SEO Equity and Link Checks

SEO equity (often called link equity) is the ranking value your website earns, mainly through links, and how well that value is distributed across your pages. Your SEO audit report should always check whether that equity is leaking away and how to prevent it.

[su_accordion class=””]

[su_spoiler title=”Redirected URLs (302 redirect)” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]A 302 redirect signals that a page has moved only temporarily, so search engines generally keep the original URL indexed instead of the destination page. If a page has moved permanently, use a 301 redirect instead so that search engines index the new URL and consolidate the page’s SEO equity there.[/su_spoiler]

[su_spoiler title=”Redirection Chains” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]Long redirect chains (even three hops can be too many) can cause search engine bots to stop following the chain before they reach the final page, and you may lose link equity along the way. Redirect old URLs directly to their final destination.[/su_spoiler]

[su_spoiler title=”Broken Redirects or 404 Links” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]Broken links (links that lead to a 404 error page) and redirects that point to missing pages hurt both user experience and search ranking. Fix them by updating the link or redirecting it to the most relevant live page.[/su_spoiler]

[su_spoiler title=”No Follow Tags” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]The rel=”nofollow” attribute asks Google not to pass any equity or credit through a link, so the link does little to help the target page rank. Since 2019, Google has treated nofollow as a hint rather than a strict directive. Make sure important internal links aren’t marked nofollow by mistake.[/su_spoiler]

[su_spoiler title=”Malicious Links” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]Links pointing to your site from malicious or spammy sources can have a direct impact on your website traffic, rankings and even your Google Ads. Such links may persist in cached pages or keep reappearing because of an ongoing issue, so review your inbound links regularly.[/su_spoiler]

[/su_accordion]
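
To spot the redirect problems described above, you can follow each URL through a redirect map and flag temporary (302) hops, chains longer than one hop, and loops. The sketch assumes a hypothetical `redirects` dictionary that maps each source URL to its HTTP status code and target, such as one you might build from a crawler export; it does not fetch anything over the network, and the 10-hop cap is an arbitrary safety limit.

```python
def trace_redirects(redirects: dict[str, tuple[int, str]], start: str, max_hops: int = 10):
    """Follow `start` through a redirect map and report problems.

    Returns (final_url, hop_count, problems).
    """
    problems = []
    seen = {start}
    url = start
    hops = 0
    while url in redirects:
        status, target = redirects[url]
        hops += 1
        if status == 302:
            problems.append(f"302 (temporary) redirect: {url} -> {target}")
        if target in seen:
            problems.append(f"redirect loop back to {target}")
            return target, hops, problems
        if hops >= max_hops:
            problems.append(f"gave up after {max_hops} hops")
            return target, hops, problems
        seen.add(target)
        url = target
    if hops > 1:
        problems.append(f"chain of {hops} hops; redirect {start} directly to {url}")
    return url, hops, problems


if __name__ == "__main__":
    # Hypothetical redirect map: source URL -> (status code, target URL).
    redirect_map = {
        "/old-services": (301, "/services-2019"),
        "/services-2019": (302, "/services"),
        "/a": (301, "/b"),
        "/b": (301, "/a"),
    }
    for source in ["/old-services", "/a"]:
        final, hops, problems = trace_redirects(redirect_map, source)
        print(source, "->", final, f"({hops} hops)", problems)
```

A second pass could check each final URL against your list of live pages to catch redirects that end in a 404.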

6. Social Media and Platform Analysis

Social media and platform analysis checks whether your website is properly connected to your social media profiles and business listings. This includes:

[su_accordion class=””]

[su_spoiler title=”Google My Business setup” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]A complete Google My Business profile is a major part of local SEO and makes your business location and website easy to find in search.[/su_spoiler]

[su_spoiler title=”Facebook setup” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]Set up Open Graph tags, which let you control the title, description and image that Facebook shows when someone shares your links on the platform.[/su_spoiler]

[su_spoiler title=”Snippet SEO tools” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]Use embed snippets for Instagram, LinkedIn, Twitter and YouTube to display titles, images and descriptions from each platform on your website, showcasing your activity, reviews and more.[/su_spoiler]

[/su_accordion]
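
Open Graph tags are plain `<meta>` elements in a page’s `<head>`. This sketch generates the basic set defined by the Open Graph protocol (`og:title`, `og:type`, `og:image` and `og:url`, plus the optional `og:description`), escaping values so that quotes and ampersands don’t break the HTML. The function name and example values are hypothetical.

```python
from html import escape


def open_graph_tags(title: str, description: str, image_url: str, page_url: str,
                    og_type: str = "website") -> str:
    """Return Open Graph <meta> tags to paste into a page's <head>."""
    properties = {
        "og:title": title,
        "og:type": og_type,
        "og:image": image_url,  # use an absolute URL
        "og:url": page_url,  # the canonical URL of the page
        "og:description": description,
    }
    return "\n".join(
        f'<meta property="{name}" content="{escape(value, quote=True)}">'
        for name, value in properties.items()
    )


if __name__ == "__main__":
    print(open_graph_tags(
        title="Technical SEO Audit Checklist",
        description="Seven areas to review in your next technical SEO audit.",
        image_url="https://www.example.com/images/seo-audit.png",
        page_url="https://www.example.com/blog/seo-audit-checklist",
    ))
```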

7. Google Search Console Check

Finally, analyze your Google Search Console account (formerly Google Webmaster Tools), which should be a core part of your SEO audit. Follow these steps while checking your account:

[su_accordion class=””]

[su_spoiler title=”Link Google Search Console to Google Analytics” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]Linking the two accounts lets you view search performance data alongside your traffic and conversion tracking.[/su_spoiler]

[su_spoiler title=”Internal links” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]Internal links connect one page of your website to another. They help users explore the website seamlessly and establish a hierarchy within categories.[/su_spoiler]

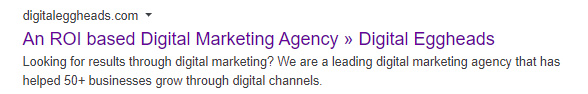

[su_spoiler title=”Sitelinks” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]Sitelinks are links to other pages on your site that Google may show below your main result in search results. They can improve click-through rate (CTR) and let users jump straight to pages relevant to their search query. Google generates sitelinks automatically, so a clear site structure makes them more likely to appear.[/su_spoiler]

[su_spoiler title=”Blocked Pages by Robots.txt” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]These are the pages Googlebot doesn’t crawl because robots.txt directives block them. If important pages are blocked by mistake, search engines can’t crawl their content, so those pages may be left out of search results or shown without a description.[/su_spoiler]

[su_spoiler title=”Sitemap Indexation” open=”no” style=”fancy” icon=”plus” anchor=”” class=””]Compare the number of pages submitted in your sitemap with the number that have been indexed. This lets you check whether any URL is inaccessible or has been blocked by robots.txt.[/su_spoiler]

[/su_accordion]
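
Alongside Search Console’s report, you can test which URLs your robots.txt rules block using Python’s standard `urllib.robotparser` module. This is a sketch under a few assumptions: the robots.txt content and URLs are hypothetical, `blocked_urls` is an illustrative helper name, and the standard-library parser applies simple prefix rules, so it does not reproduce every detail of Googlebot’s matching (such as `*` and `$` wildcards in paths).

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks /blog/ by mistake.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /blog/
"""


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules stop `user_agent` from crawling."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    important_pages = [
        "https://www.example.com/",
        "https://www.example.com/blog/seo-audit-checklist",
        "https://www.example.com/services/seo",
    ]
    for url in blocked_urls(ROBOTS_TXT, important_pages):
        print("Blocked:", url)
```

Running your list of important pages through a check like this before deploying a robots.txt change can catch accidental blocks early.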

That’s all for now. The list may seem long and daunting, but once you understand how search engine optimization really works, it’s easier to sift through the clutter and find exactly why your website isn’t ranking as it should. To make sure your site has the right structure and compatibility to rank on Google or any other search engine, your development and content teams need to understand the main terminology and the guidelines they should follow during setup.

To read more about digital marketing, SEO and Digital Eggheads, visit our blog page here.